How Does Slickstream Index Site Content?

We are often asked how Slickstream builds and maintains an index of each of our customers' sites, and how quickly that index is updated after content is added, changed, or deleted. If you want these answers, you've come to the right place!

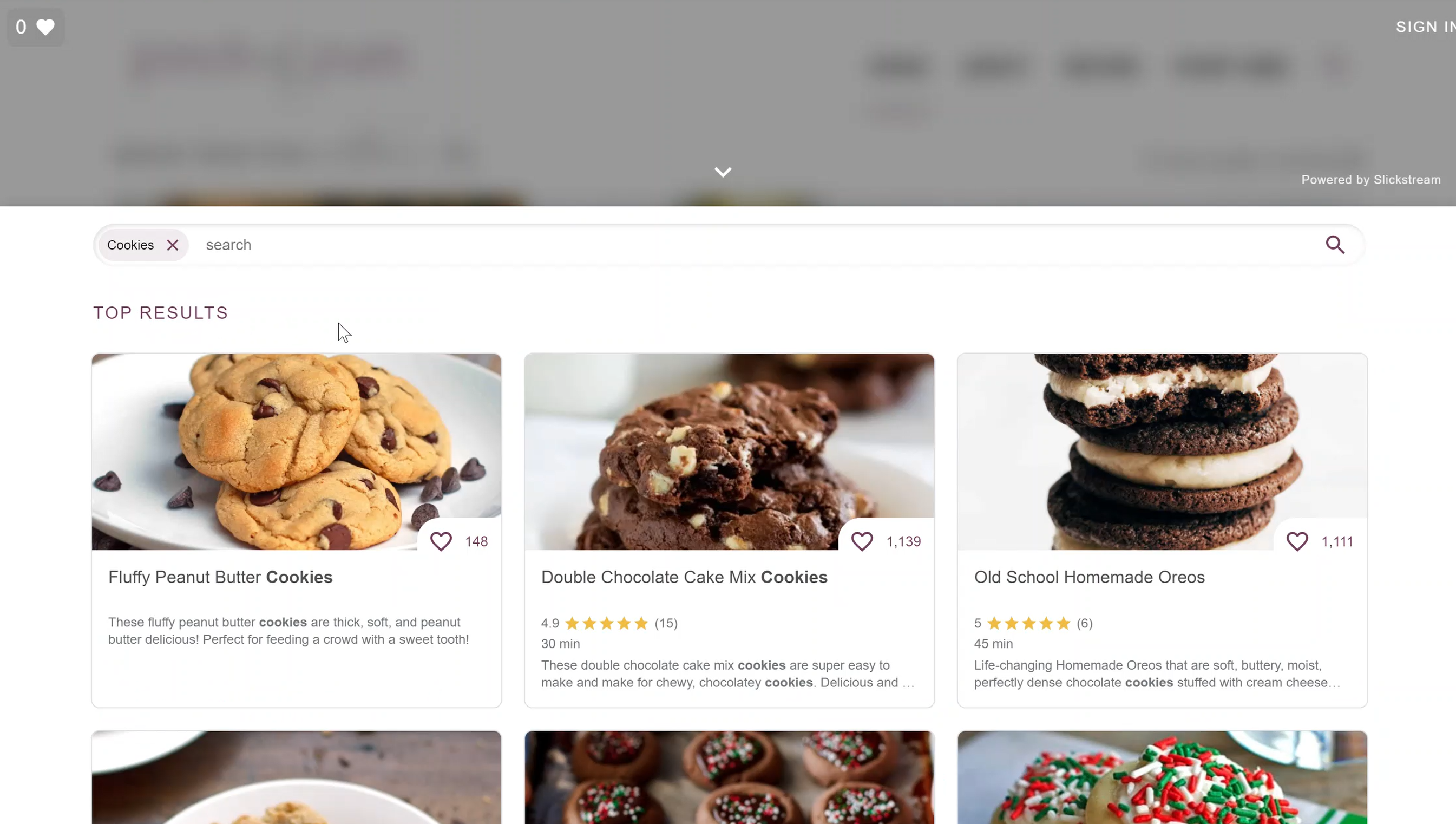

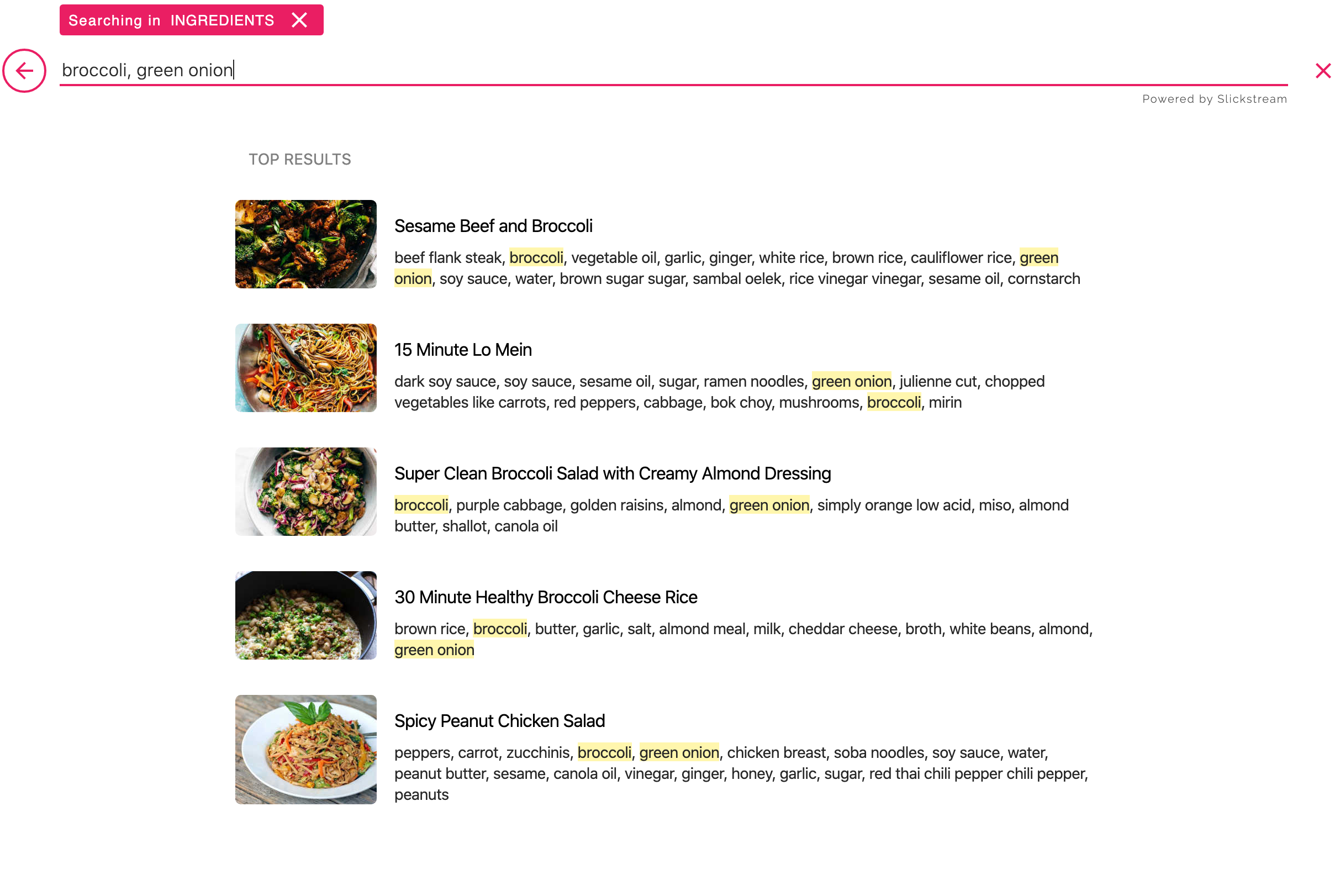

Slickstream is a "cloud service". We maintain our own servers (separate from yours) that handle all of the processing and storage we need to add our features to your site. Those servers do several things, but one of the most important is maintaining a full index of your site. That index is a complete map of your site: we know about every page, and we know a lot about each of those pages. That allows us to provide search, recommendations, favorites, and games appropriate to every page and every viewer.

Sitemaps

You may have heard of "crawlers". In essence, Slickstream is a crawler. However, we do not "crawl" in the sense that you may think. (We DO NOT load your home page and then follow every link to other pages, and follow the links on those pages, and so on.)

Our map of your site is based on your map. Your site has a set of files that list all of the content on your site. These are the so-called "sitemap" files. For most sites, you'll find the root sitemap at /sitemap_index.xml relative to your root domain. For example, if your site is at https://worldsbestblog.com, then you may find your sitemaps by going to https://worldsbestblog.com/sitemap_index.xml. (Some sites host their sitemaps elsewhere, but we handle most cases automatically.)
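To make the idea of a root sitemap concrete, here is a minimal sketch of reading one. It is an illustration only, not Slickstream's actual code: the function name `child_sitemaps` and the two child file names in the example are assumptions, and the XML namespace is the one defined by the public sitemap protocol.

```python
import xml.etree.ElementTree as ET
from typing import List

# Namespace used by the public sitemap protocol (sitemaps.org).
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical root sitemap; the child file names are made up for illustration.
EXAMPLE_INDEX = """<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap><loc>https://worldsbestblog.com/post-sitemap.xml</loc></sitemap>
  <sitemap><loc>https://worldsbestblog.com/page-sitemap.xml</loc></sitemap>
</sitemapindex>"""


def child_sitemaps(index_xml: str) -> List[str]:
    """Return the URLs of the child sitemaps listed in a sitemap index."""
    root = ET.fromstring(index_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:sitemap/sm:loc", SITEMAP_NS)
        if loc.text
    ]


if __name__ == "__main__":
    for url in child_sitemaps(EXAMPLE_INDEX):
        print(url)
```

Each child sitemap in turn lists individual pages, which is where the page URLs and "last changed" timestamps come from.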

We use your sitemaps for three things: a list of all of your pages, the categorization of your site (posts, pages, categories, products, etc.), and timestamps telling us when each page last changed. Whenever we audit your site, we fetch all of your sitemaps and compare them to the copies from our previous fetch. From that, we can see which pages were added, deleted, and modified, and we update our index accordingly. For added and modified pages, we fetch those pages again and update our index with the new information.
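The comparison described above can be sketched in a few lines. This is a simplified illustration, not our production code: it assumes each sitemap snapshot has already been reduced to a mapping from page URL to its "last modified" timestamp, and the name `diff_sitemaps`, the URLs, and the dates are all hypothetical.

```python
from typing import Dict, List, Tuple


def diff_sitemaps(
    previous: Dict[str, str], current: Dict[str, str]
) -> Tuple[List[str], List[str], List[str]]:
    """Compare two snapshots (URL -> lastmod) and return (added, deleted, modified)."""
    added = sorted(current.keys() - previous.keys())
    deleted = sorted(previous.keys() - current.keys())
    # A page counts as modified when it is in both snapshots but its timestamp changed.
    modified = sorted(
        url for url in current.keys() & previous.keys() if current[url] != previous[url]
    )
    return added, deleted, modified


if __name__ == "__main__":
    # Hypothetical snapshots from two consecutive audits.
    previous = {
        "https://worldsbestblog.com/apple-pie": "2024-01-01",
        "https://worldsbestblog.com/old-post": "2023-06-15",
        "https://worldsbestblog.com/about": "2023-01-10",
    }
    current = {
        "https://worldsbestblog.com/apple-pie": "2024-02-20",
        "https://worldsbestblog.com/about": "2023-01-10",
        "https://worldsbestblog.com/new-post": "2024-02-21",
    }
    added, deleted, modified = diff_sitemaps(previous, current)
    print("added:", added)
    print("deleted:", deleted)
    print("modified:", modified)
```

Only the pages in the added and modified lists need to be fetched again, which keeps the extra load on your server small.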

Thumbnails / Featured Images

To make our widgets blazingly fast, we don't want to load your full-sized images into our filmstrip or search results. That would slow down the experience for your viewers. So when we index your site, we also build thumbnails for all of the images that we may serve up in our widgets. In fact, we build multiple thumbnail variants, each optimized for a different purpose. If we see that the featured image on one of your pages has changed, we'll build new thumbnails for that page automatically.

Index Frequency

A bit earlier, I mentioned that "when we audit your site", we fetch your sitemaps and update our index accordingly. But when do we do that audit? Obviously, you'd like for our index to remain as up-to-date as possible. When you post some new content, you'd like for it to start appearing in search results and relevant recommendations right away. So how long until the next audit?

First of all, we always audit each site at least once every 12 hours. But that could mean waiting a long time before the index is current after a change! So we also aim to audit your site within a minute or two of any change. But how is that possible? After all, don't we have to do the audit itself to find out if there have been any changes? No! Remember that Slickstream's embed code is on every one of your pages. So when one of your viewers loads a page in their browser, Slickstream has a chance to check whether that page is new or has changed. If so, we know about it right away and can start an audit immediately.

For normal cases, therefore, after the first pageview on a new or updated page, we will kick off an audit immediately. The auditing work is queued because our servers have to handle this for hundreds or thousands of sites. In most cases, workloads are small and your audit will start promptly -- usually within a minute or two. And the audit itself can take anywhere from a few seconds to several minutes depending on how many changes need to be processed.

Potential Indexing Blockers

There are some cases where indexing pages within Slickstream can be hindered. These cases are not common, but the potential issues are described below.

Multiple Reindex Triggers

If we start to see a lot of triggers for the same site in close succession, we will throttle them, and that can slow down auditing. In the worst case, this could delay page updates from appearing in Slickstream by as much as a few hours.
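The exact throttling policy is not described here, so the following is only a sketch of the general idea under a simple assumption: a site may not start a new triggered audit until a minimum interval has passed since its last one. The class name `AuditThrottle` and the 60-second interval are hypothetical.

```python
from typing import Dict


class AuditThrottle:
    """Allow at most one triggered audit per site per minimum interval (assumed rule)."""

    def __init__(self, min_interval_seconds: float) -> None:
        self.min_interval = min_interval_seconds
        self._last_started: Dict[str, float] = {}

    def should_start(self, site: str, now: float) -> bool:
        """Return True and record the start time if an audit may begin at `now`."""
        last = self._last_started.get(site)
        if last is not None and now - last < self.min_interval:
            return False  # Too soon after the previous audit: throttle this trigger.
        self._last_started[site] = now
        return True


if __name__ == "__main__":
    throttle = AuditThrottle(min_interval_seconds=60)  # Hypothetical interval.
    for t in (0, 10, 30, 61):
        print(t, throttle.should_start("worldsbestblog.com", now=t))
```

With a rule like this, a burst of triggers collapses into a single audit, and later triggers wait for the interval to pass.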

Page Not in Sitemaps

Another problem we run into is a site where we see a new page, but when we run the audit, that page has not yet shown up in the sitemaps. We fight any caching of sitemaps for this reason. But if your sitemaps are slow to update, the new page may not be indexed until a later audit, once it finally appears in the sitemap.

Restricted Pages

Yet another issue we deal with is new pages that appear to us as restricted (returning 401 or 403 errors) when we fetch them. This happens if you use a publishing approach where you restrict access to new pages so that only your own site admins can access them. At some point, you remove this restriction for your new page, but we have no way to know when that happens. So this is another case where we may not pick up the page until the next audit cycle, when we find it in the sitemaps, fetch it successfully, and add it to the index.

Sitemap Caching or Other Sitemap Issues

One of the biggest challenges we face is badly behaved sitemaps. Many sites have servers that are underpowered. When we fetch your sitemaps, your WordPress SEO plugin builds them on the fly, and that can take too long. So we get network timeouts fetching the sitemaps. We retry multiple times, but for underpowered sites we frequently see failures fetching the maps. We handle this as well as we can. For example, we maintain our own cache of sitemaps for each site. So if we get an error fetching a particular sitemap, we fall back to the last cached copy we have. The problem, of course, is that we may then not be using the latest information about additions and changes. So your new page may not get into the index until your sitemaps are all responding normally.
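The retry-then-fall-back behavior can be sketched as follows. This is an illustration under assumptions, not our actual implementation: the function name `fetch_with_fallback`, the retry count of three, and the in-memory dictionary used as a cache are all hypothetical, and the real network fetch is passed in as a function so the example stays self-contained.

```python
from typing import Callable, Dict, Tuple


def fetch_with_fallback(
    url: str,
    fetch: Callable[[str], str],
    cache: Dict[str, str],
    retries: int = 3,
) -> Tuple[str, bool]:
    """Return (sitemap_body, is_fresh).

    Tries `fetch` up to `retries` times. On success the cache is refreshed.
    If every attempt fails, the last cached copy is returned with is_fresh=False.
    """
    for _ in range(retries):
        try:
            body = fetch(url)
        except OSError:  # Covers TimeoutError and other network-level failures.
            continue
        cache[url] = body
        return body, True
    if url in cache:
        return cache[url], False  # Stale copy: may miss recent additions and changes.
    raise LookupError(f"no fresh or cached sitemap available for {url}")


if __name__ == "__main__":
    def always_times_out(url: str) -> str:
        raise TimeoutError("sitemap took too long to build")

    # Hypothetical cached copy from an earlier, successful audit.
    cache = {"https://worldsbestblog.com/sitemap_index.xml": "<sitemapindex/>"}
    body, fresh = fetch_with_fallback(
        "https://worldsbestblog.com/sitemap_index.xml", always_times_out, cache
    )
    print(fresh, body)
```

The `is_fresh` flag captures the trade-off described above: a stale copy keeps the audit going, but anything published since that copy was saved stays invisible until a fetch succeeds.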

We do our very best to keep our index as current as possible, to limit the amount of load we add to your servers, and to deal as well as we can with problems when they happen. The goal is to provide the best possible experience for you and your viewers while demanding as little of your attention as possible.

Recommendations

So if you want to get the best possible results from Slickstream on your site, you can help in the following ways:

- Make sure that your site has a complete set of sitemaps that fully describe your content;

- Make sure that your sitemaps have caching disabled -- both from your CDN and from any caching plugin you may use;

- If you restrict access to pages while they are being written, anticipate a delay of at least several hours before those pages will appear in our index;

- Ensure that your servers are not underpowered. Even if you are using a CDN, your servers still need to have enough "muscle" to handle a reasonable workload -- and that includes sitemap processing;

- If you use caching plugins (like WPRocket, LiteSpeed, NitroPack, etc.) be very careful about cases where we might be fetching content that is out of date. In particular, make sure that sitemaps are not cacheable.
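If you want a quick way to spot a sitemap response that invites caching, one rough check is to look at its `Cache-Control` header. The sketch below is a heuristic under assumptions, not a complete test: the function name `invites_caching` is hypothetical, it only inspects explicit `Cache-Control` directives, and a CDN or caching plugin can still cache a response regardless of what this header says.

```python
from typing import Dict


def invites_caching(headers: Dict[str, str]) -> bool:
    """Heuristic: True if Cache-Control explicitly allows caches to reuse the response."""
    cache_control = ""
    for name, value in headers.items():
        if name.lower() == "cache-control":  # Header names are case-insensitive.
            cache_control = value.lower()
    directives = [d.strip() for d in cache_control.split(",") if d.strip()]
    if "no-store" in directives or "no-cache" in directives:
        return False
    for directive in directives:
        key, _, value = directive.partition("=")
        value = value.strip('"')
        if key in ("max-age", "s-maxage") and value.isdigit() and int(value) > 0:
            return True
    return "public" in directives


if __name__ == "__main__":
    # Hypothetical response headers for a sitemap request.
    print(invites_caching({"Cache-Control": "public, max-age=3600"}))
    print(invites_caching({"Cache-Control": "no-store"}))
```

You could feed this the headers returned when you request your own /sitemap_index.xml; a `True` result suggests the caching settings for your sitemaps deserve a closer look.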